The “I Am A Texan” Network: Coordinated monetisation of anti-protest disinformation

As we analysed the viewership of the 12 protest-related disinformation narratives, we identified a coordinated network of 9 Facebook pages with a combined audience of over 1.5 million followers. We are calling this the “I Am A Texan” Network, because the ‘I Am A Texan’ page is the oldest page in the network and has the most page likes. In addition to apparently sharing administrators and coordinating its content posting, this network also appears to have tried to monetise its referral traffic by driving viewers to Resurge.com, a website promoting a dietary supplement.

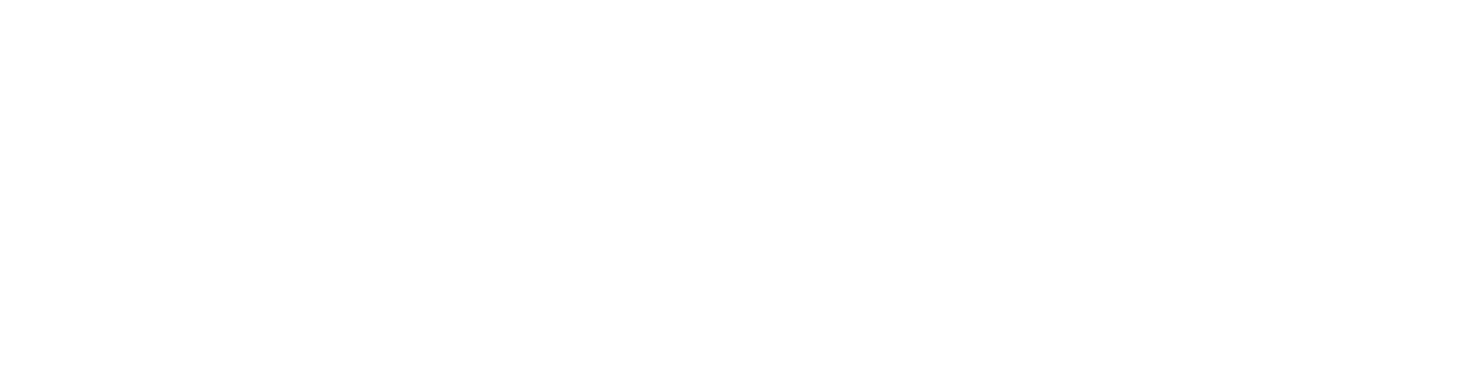

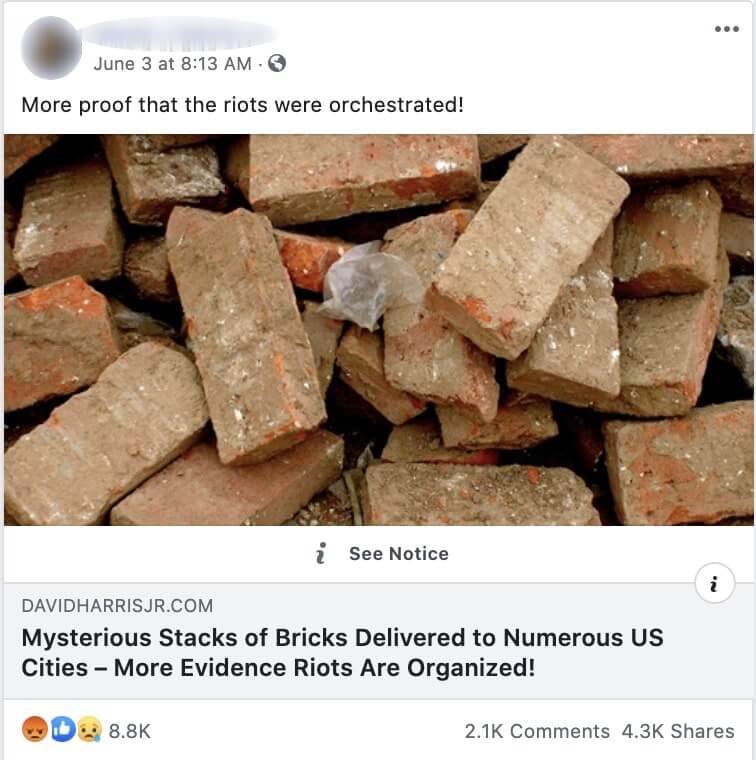

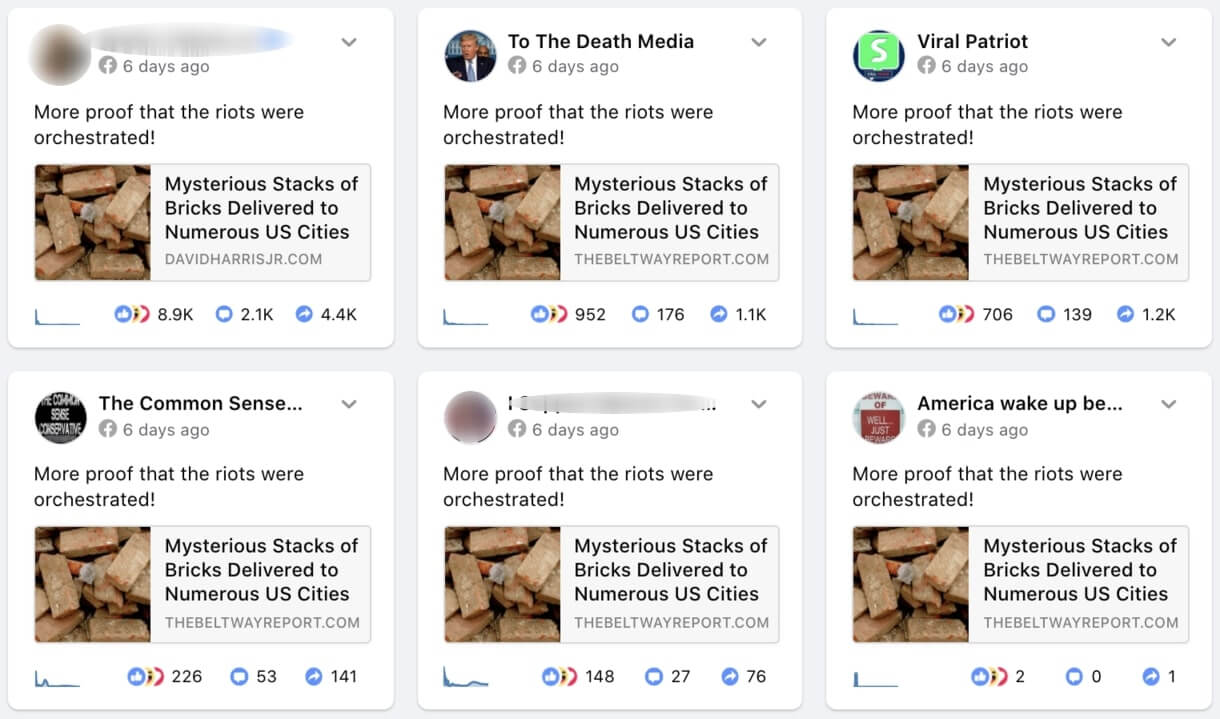

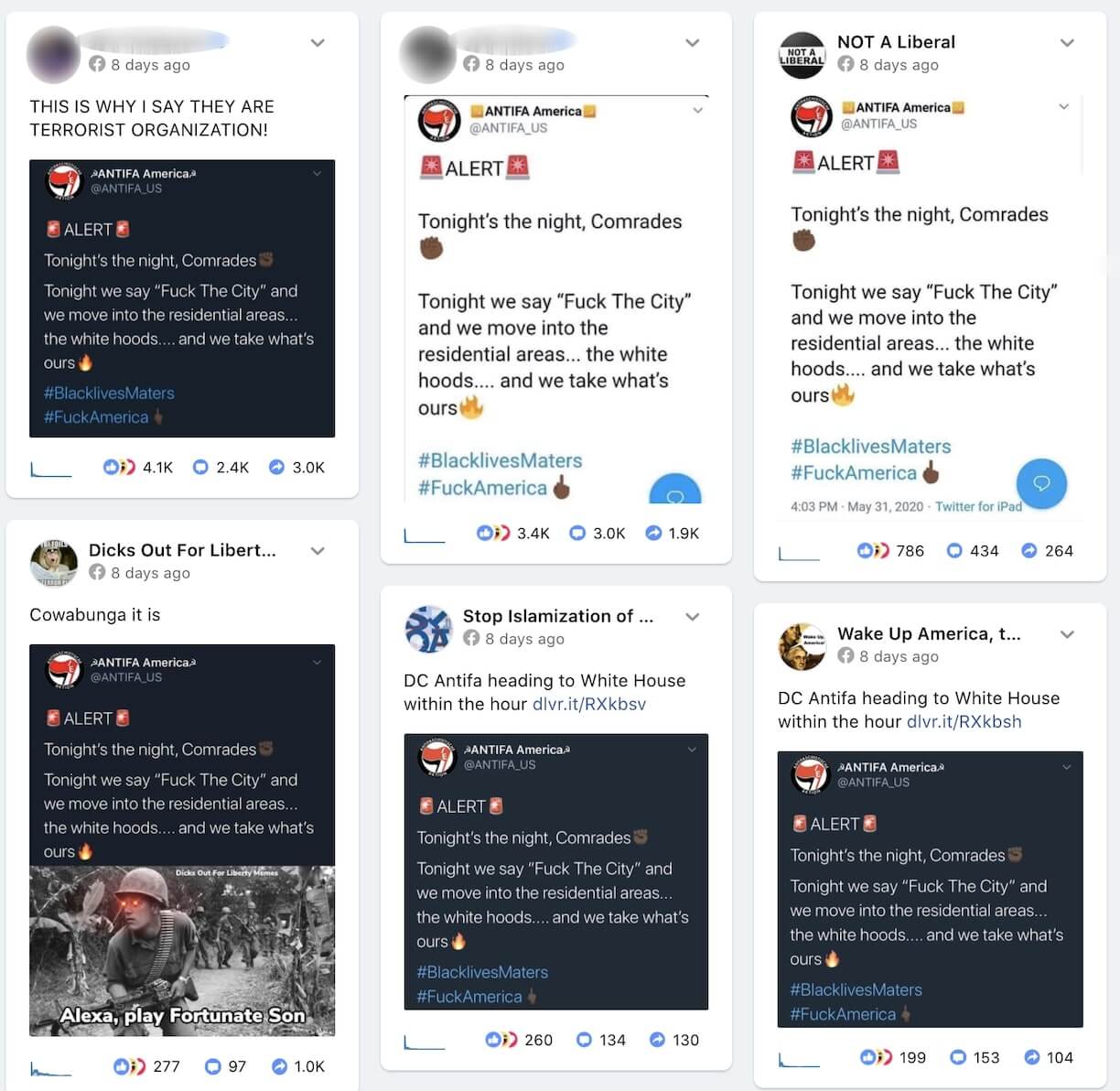

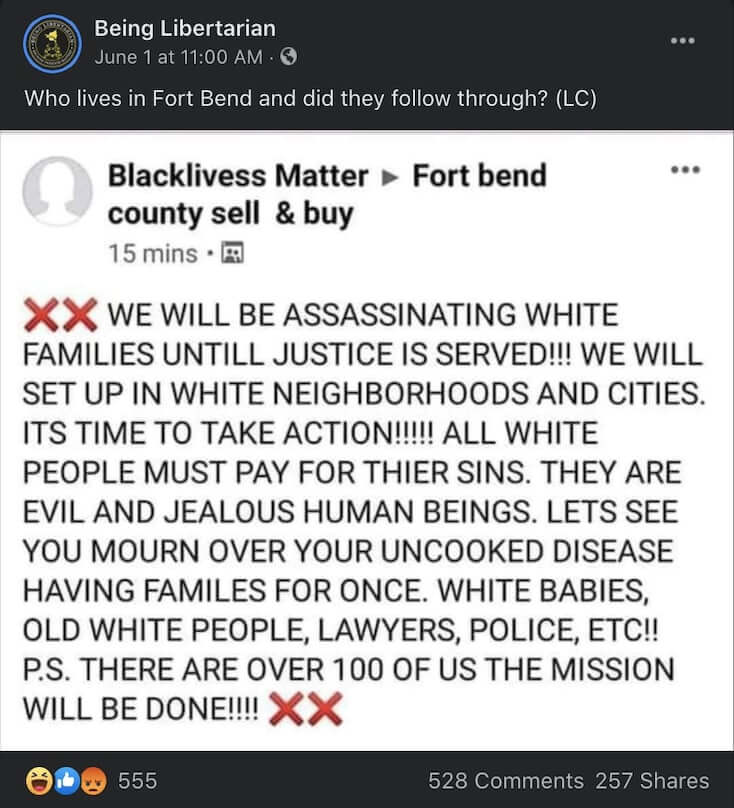

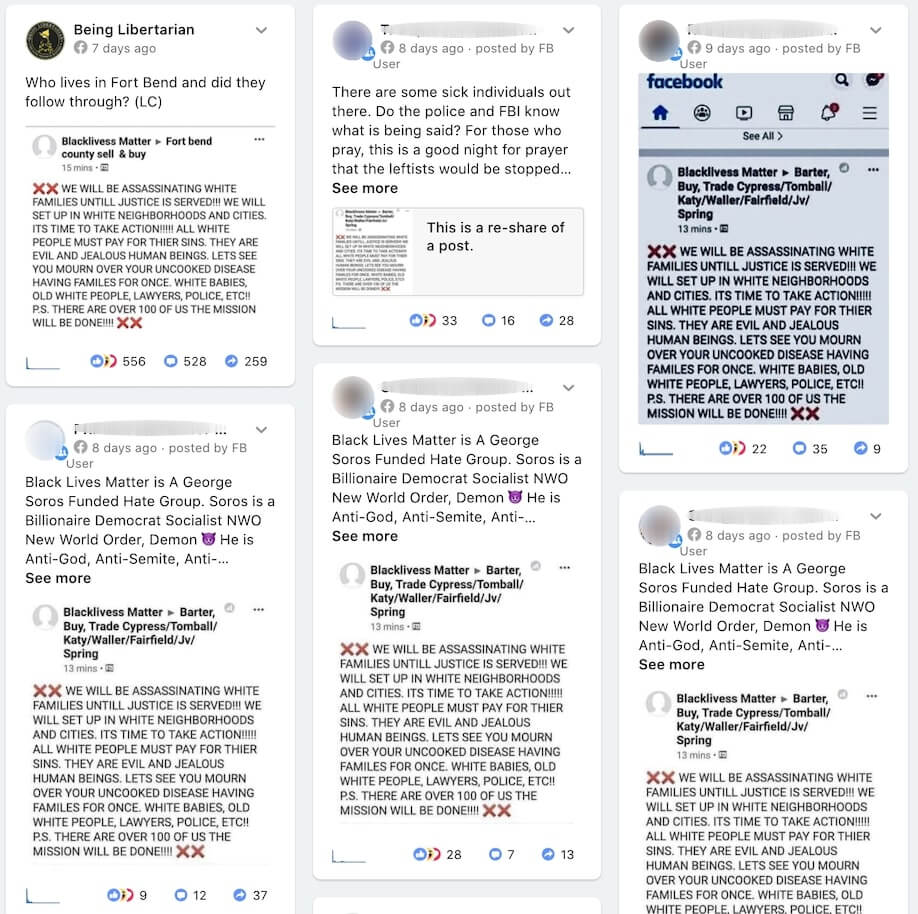

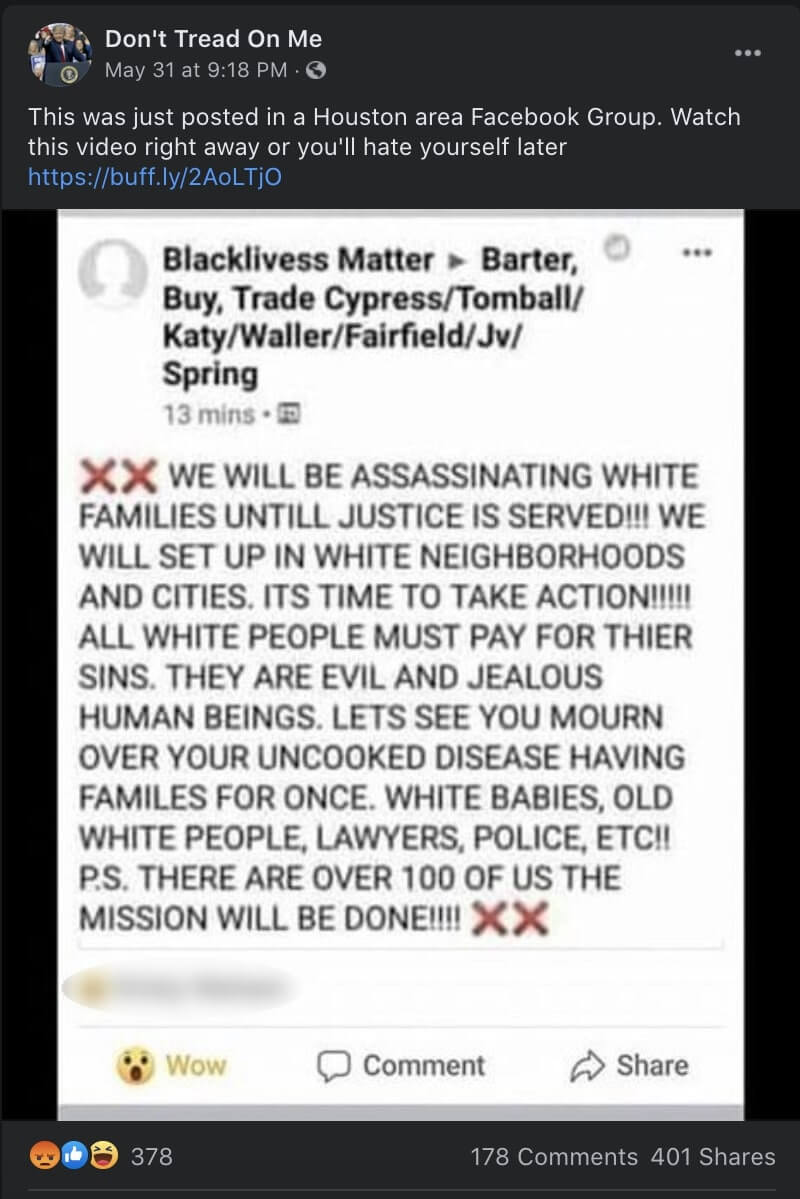

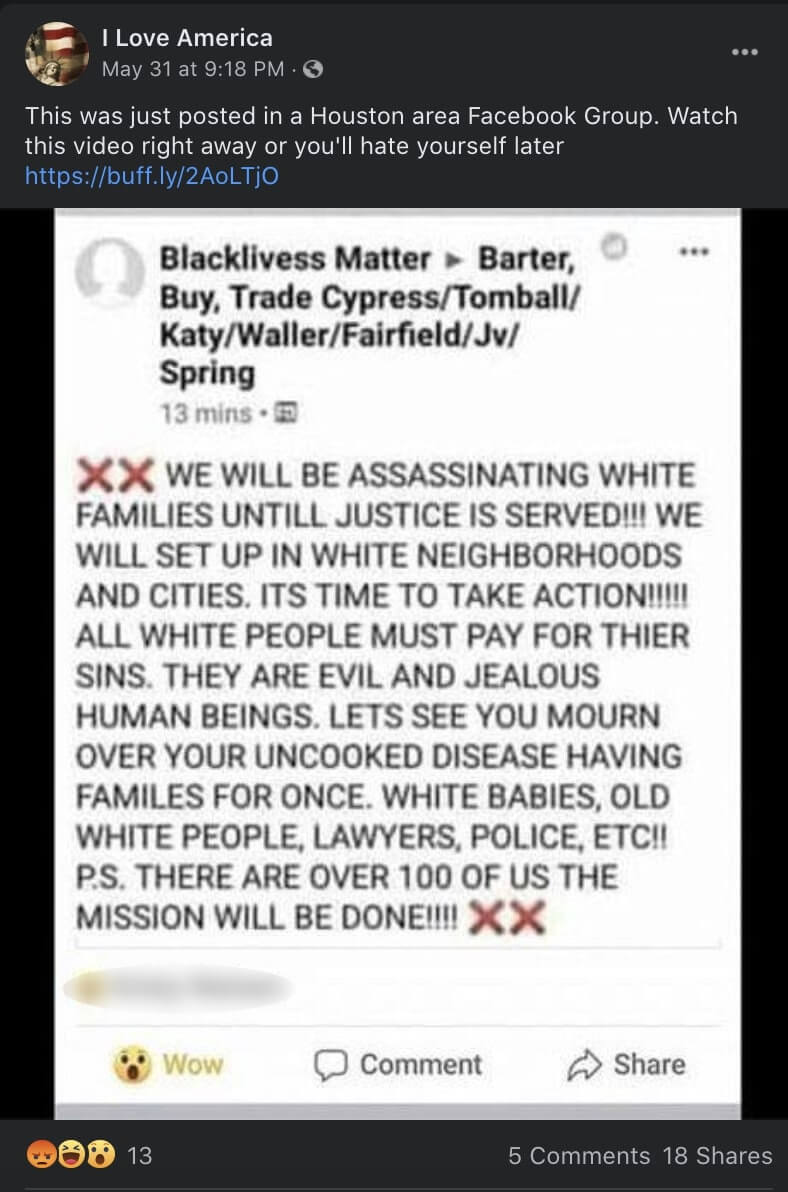

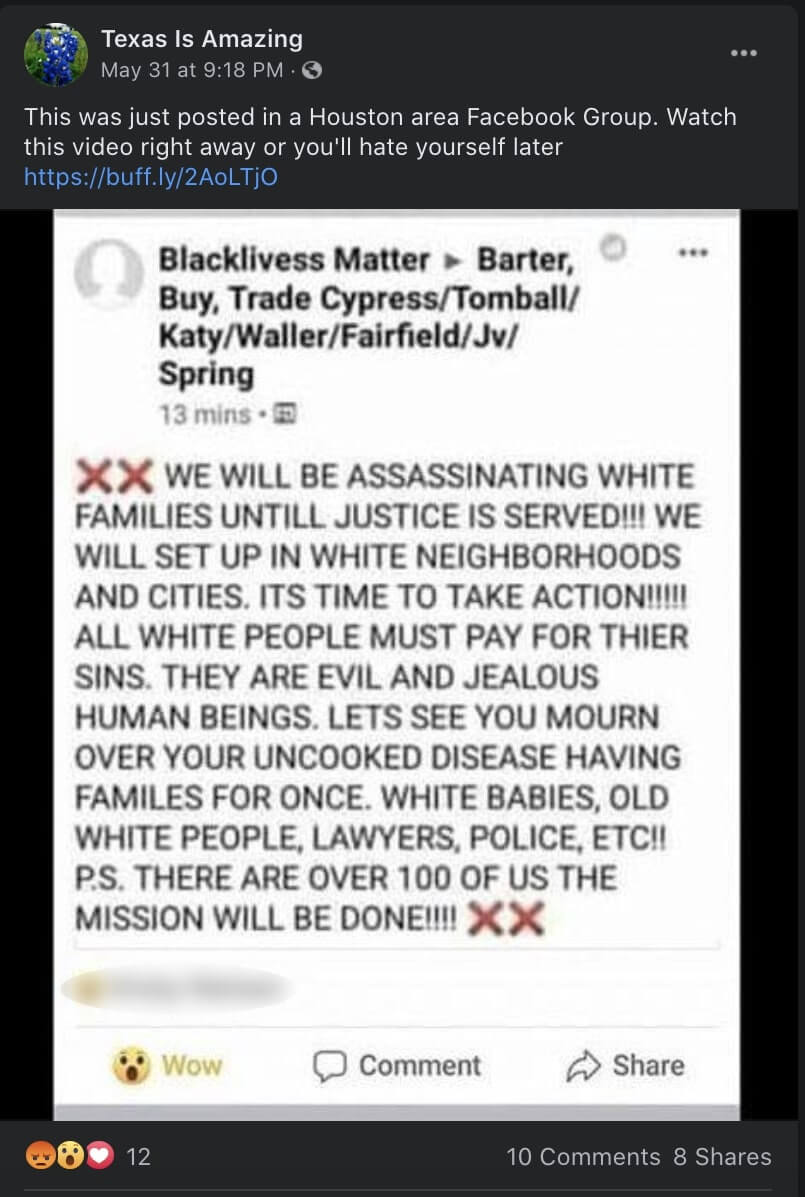

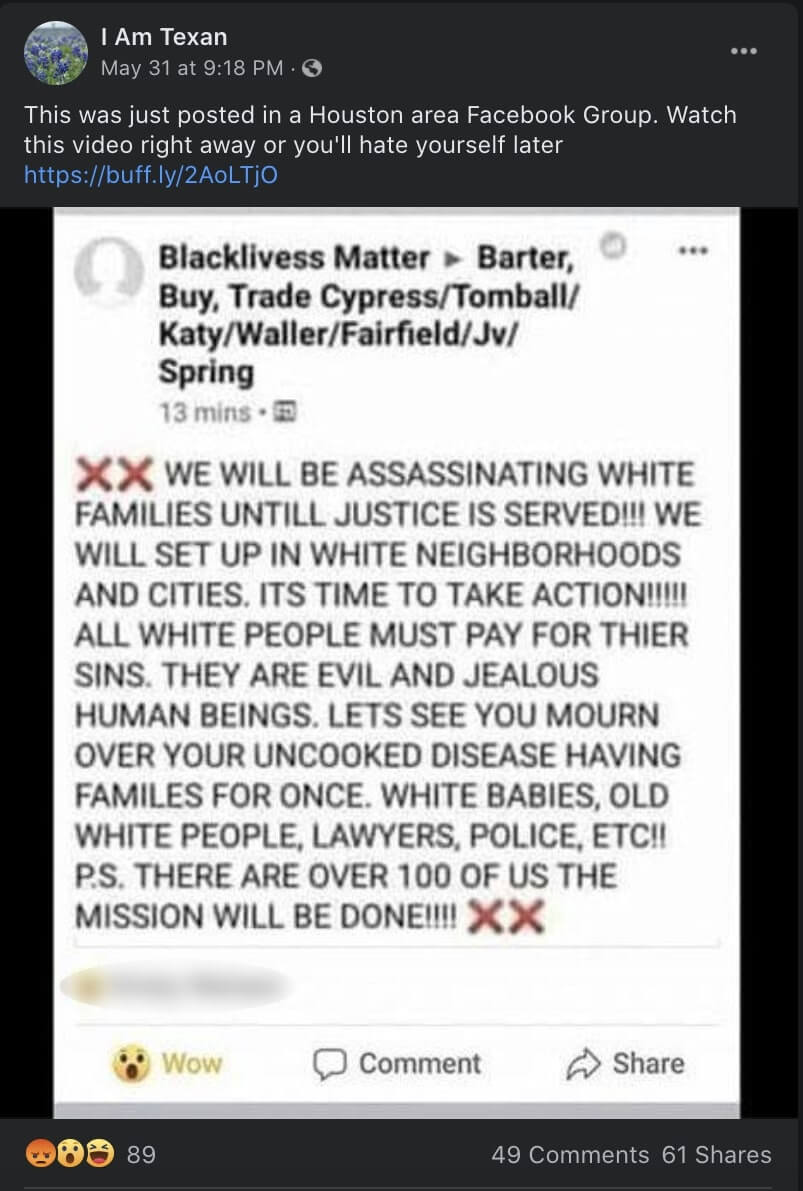

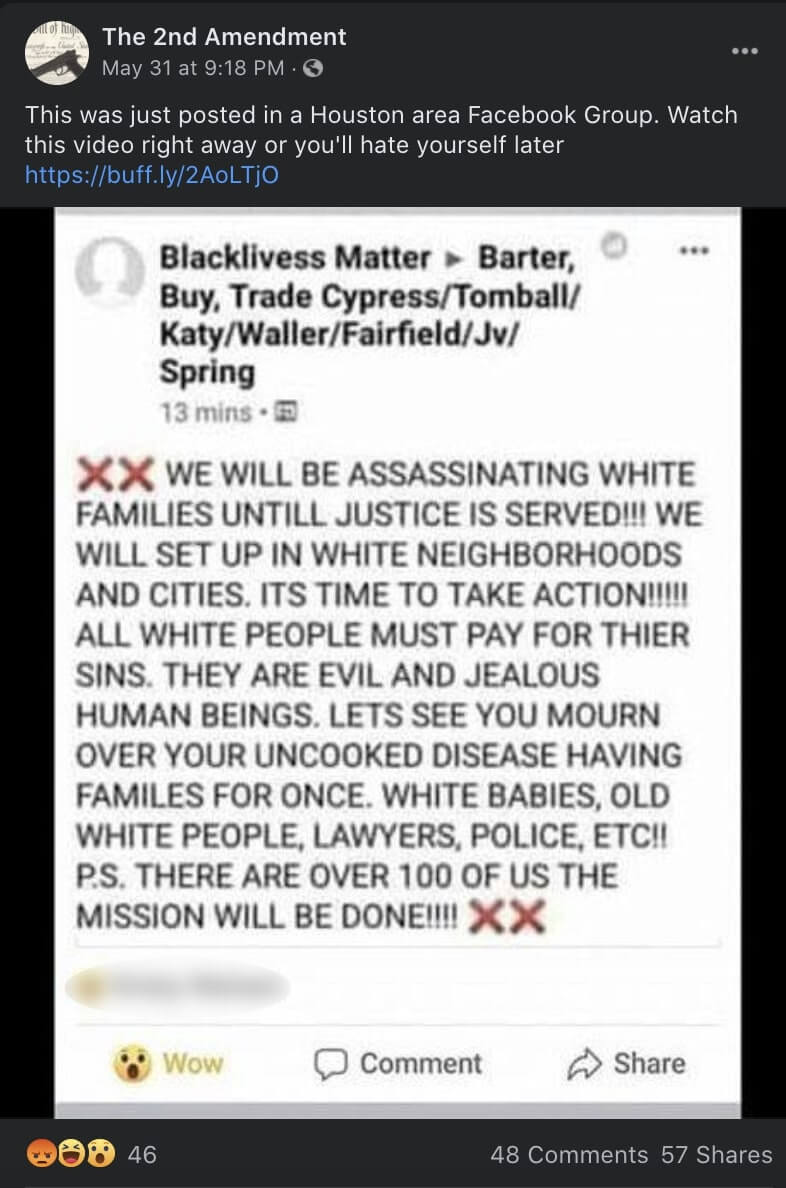

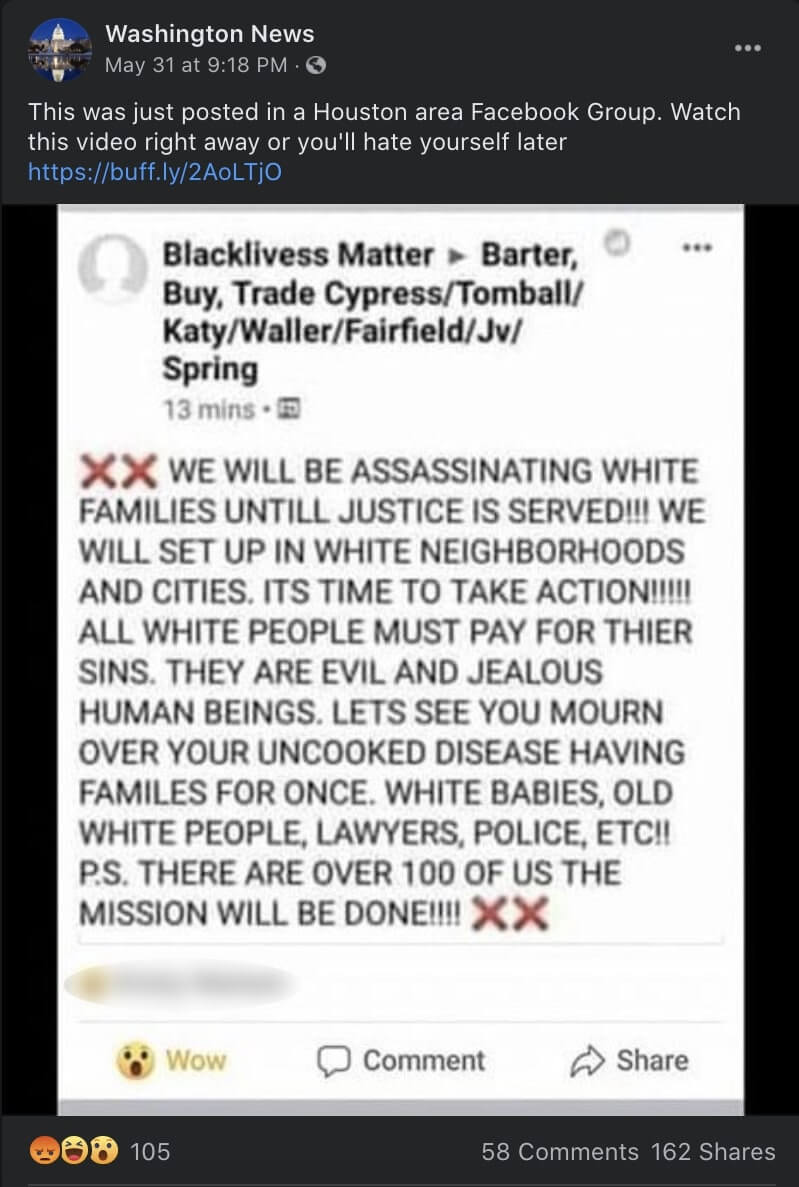

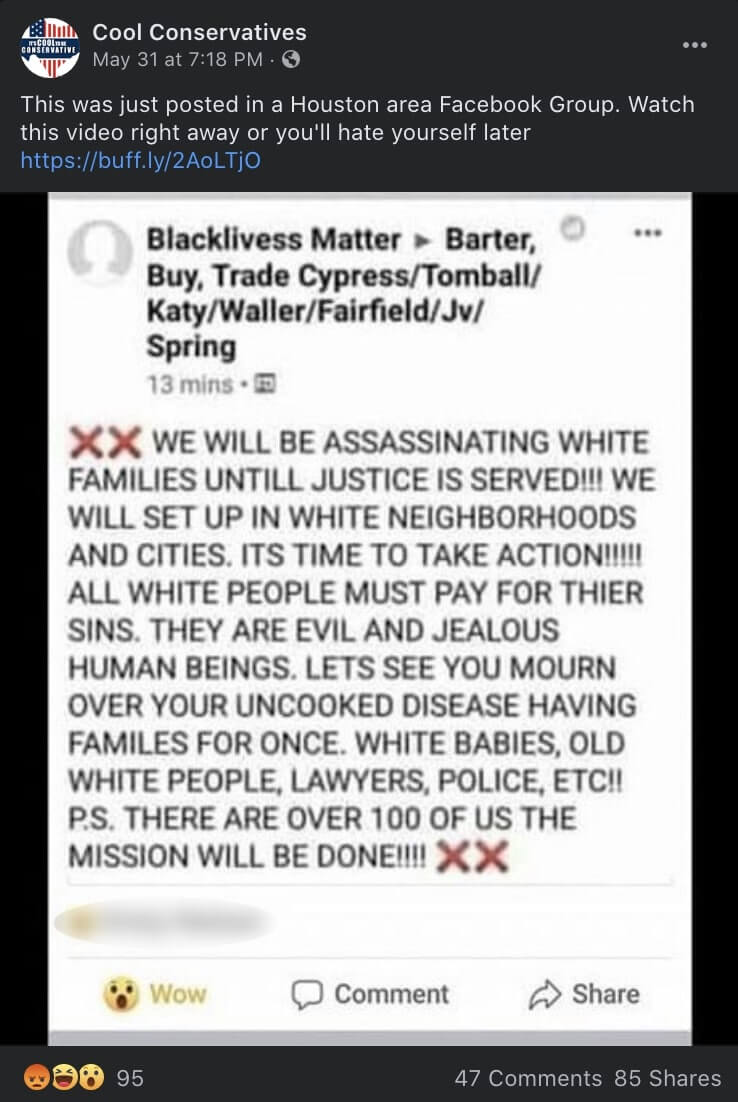

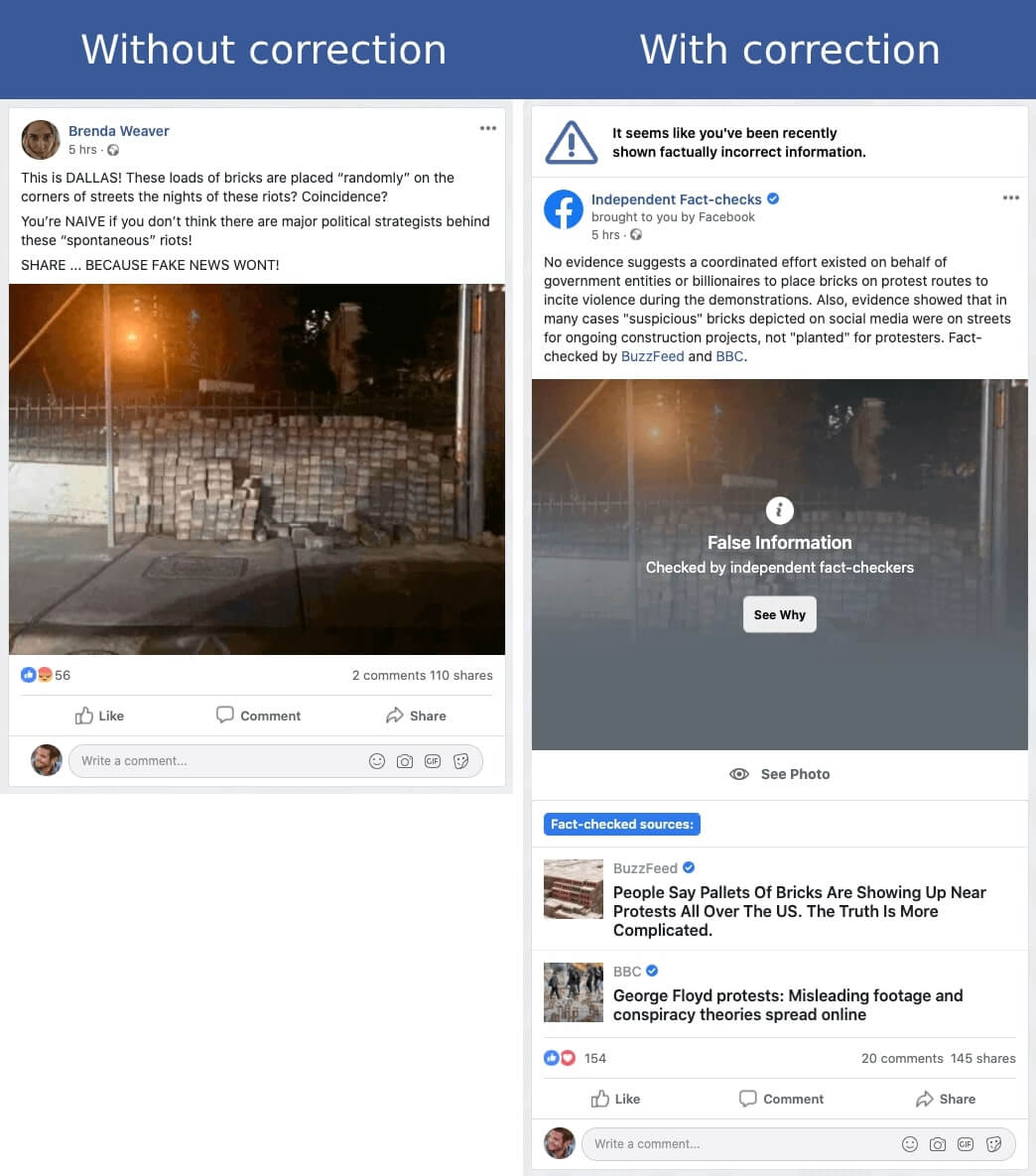

Between May 26, 2020 and June 8, 2020, this network promoted three of the disinformation narratives we analysed: that George Soros orchestrated the protests; that “Antifa” placed pallets of bricks at protest sites to stoke violence; and that “Black Lives Matter” had announced its intention to “assassinate white families.” The network regularly posts identical content to its pages within seconds of each other.

The 9 pages in the “I Am A Texan” Network that we identified are shown below.


| Page | URL | Created | Admins | Likes | Archives |
|---|---|---|---|---|---|
| Don't Tread on Me | https://www.facebook.com/letfreedomringyall/ | September 30, 2012 | United States (2), Germany (1) | 518,654 | http://archive.ph/ClPwy |
| I Am A Texan | https://www.facebook.com/BeautifulTexas/ | June 6, 2012 | United States (3), Germany (1) | 520,929 | http://archive.ph/4bchP |
| Cool Conservative | https://www.facebook.com/coolconservatives | December 6, 2018 | United States (3), Germany (1) | 17,788 | http://archive.ph/G8Exz |
| The Second Amendment | https://www.facebook.com/The-2nd-Amendment-452832981470891/ | May 3, 2013 | United States (3), Germany (1) | 156,066 | http://archive.ph/mRN41 |
| Start Draining America | https://www.facebook.com/startdrainingnow/ | May 26, 2013 | United States (3), Germany (1) | 170,528 | http://archive.ph/CltYH |
| Washington News | https://www.facebook.com/Washington-News-1583110238580443/ | April 4, 2015 | United States (2), Germany (1) | 31,161 | http://archive.ph/OPEDV |
| I Am Texan | https://www.facebook.com/iamtexan1/ | July 16, 2015 | United States (2), Germany (1) | 69,332 | http://archive.ph/Jr6jJ |
| I Love America | https://www.facebook.com/reclaimamericaforliberty | September 19, 2013 | United States (7), Germany (1) | 44,941 | http://archive.ph/8Csn0 |
| Texas is Amazing | https://www.facebook.com/amazingtx/ | November 16, 2014 | United States (3), Germany (1) | 23,806 | http://archive.ph/DiQz6 |
| | | | Total Likes | 1,553,205 | |

A number of factors indicate that these pages are maintained by the same administrators. As the table above shows, the pages appear to share a set of administrators based in the US and Germany: every page has 1 admin in Germany, plus 2 to 3 in the US. The one exception is the “I Love America” page, which has 7 US-based admins and 1 in Germany.

Further, the same items are frequently posted to several of the network’s pages within very tight time bounds, with identical post text and an identical Buff.ly shortlink.

For example, on 8 of the pages in the “I Am A Texan” Network, Avaaz observed that the story of Black Lives Matter announcing its intention to “assassinate white families” was posted to all 8 pages within 11 seconds of each other, and every post included identical text and links. It appears that, in the last 24 hours, all but one of these posts have now been taken down. The rest of the network appears to still be live.
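The coordination signal described above (identical text and an identical shortlink appearing on several pages within a few seconds) can be illustrated with a short sketch. This is not the tooling Avaaz used; it is a minimal illustration that assumes post records are already available with a page name, text, link and timestamp. The `Post` type, the function name, the page names, the links and the timestamps are all hypothetical placeholders, and the 11-second default window simply mirrors the example above.

```python
from collections import defaultdict
from datetime import datetime, timedelta
from typing import NamedTuple


class Post(NamedTuple):
    """Hypothetical record for one page post (not a Facebook API type)."""
    page: str
    text: str
    link: str
    posted_at: datetime


def find_coordinated_bursts(posts, window=timedelta(seconds=11), min_pages=2):
    """Return groups of identical posts that hit several pages in a short span.

    Posts are grouped by exact (text, link) match. A group is reported when it
    covers at least `min_pages` distinct pages and the gap between its first
    and last post is no longer than `window`.
    """
    groups = defaultdict(list)
    for post in posts:
        groups[(post.text, post.link)].append(post)

    bursts = []
    for (text, link), items in groups.items():
        items.sort(key=lambda p: p.posted_at)
        spread = items[-1].posted_at - items[0].posted_at
        pages = sorted({p.page for p in items})
        if len(pages) >= min_pages and spread <= window:
            bursts.append({
                "text": text,
                "link": link,
                "pages": pages,
                "spread_seconds": spread.total_seconds(),
            })
    return bursts


# Placeholder data for illustration only; none of it comes from the report.
base = datetime(2020, 6, 1, 12, 0, 0)
sample = [
    Post("page_a", "same text", "https://buff.ly/EXAMPLE", base),
    Post("page_b", "same text", "https://buff.ly/EXAMPLE", base + timedelta(seconds=4)),
    Post("page_c", "same text", "https://buff.ly/EXAMPLE", base + timedelta(seconds=11)),
    Post("page_a", "unrelated text", "https://buff.ly/OTHER", base + timedelta(hours=2)),
]
bursts = find_coordinated_bursts(sample)
```

Note that this sketch measures the spread of the whole group rather than using a sliding window, so a story that is reposted days later with the same text and link would not be flagged. Exact string matching is also a simplification; near-identical text would need a fuzzier comparison.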

This table shows the 8 posts on the Network that have the false content claiming “Black Lives Matter activists intend to assassinate white families”
