As the coronavirus crisis started to spread across the globe, the World Health Organization (WHO) warned that “we’re not just fighting an epidemic; we're fighting an infodemic”. It cautioned that “fake news spreads faster and more easily than the virus, and it is just as dangerous”.1 [WHO, Munich Security Conference, 15 February 2020, https://www.who.int/dg/speeches/detail/munich-security-conference]

In response to the crisis, Facebook CEO Mark Zuckerberg and other company executives launched a media blitz to publicize the company’s expanded efforts to stop the spread of COVID-19 misinformation. Facebook announced that these efforts had been “quick,” “aggressive,” and “executed...quite well.”2 [CBS interview with Sheryl Sandberg, “Facebook is removing fake coronavirus news ‘quickly’, COO Sheryl Sandberg says”; Facebook announcement, “Combating COVID-19 Misinformation Across Our Apps”; Mark Zuckerberg press briefing, 18 March 2020, https://about.fb.com/wp-content/uploads/2020/03/March-18-2020-Press-Call-Transcript.pdf]

In February, our team began detecting and monitoring widespread misinformation about COVID-19 online. In March, our investigative team set out to analyse and assess the efficacy of Facebook’s efforts to combat this “infodemic” on its main platform.

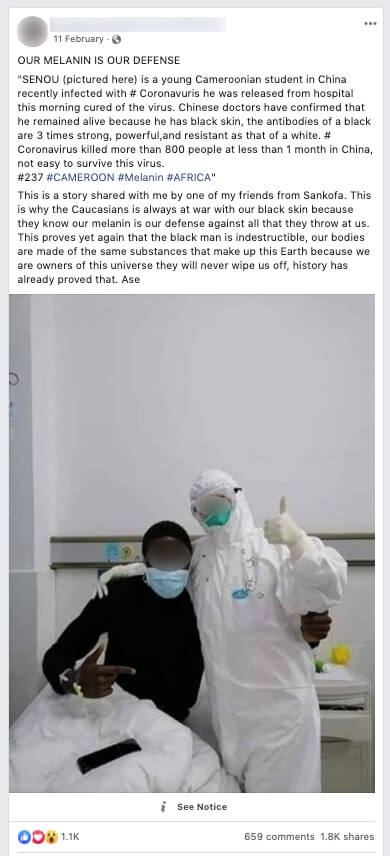

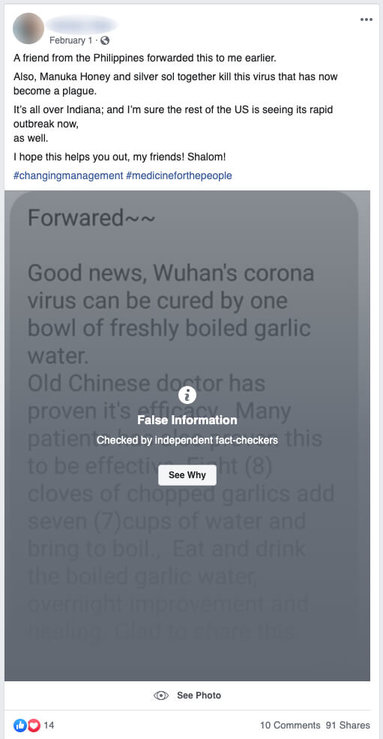

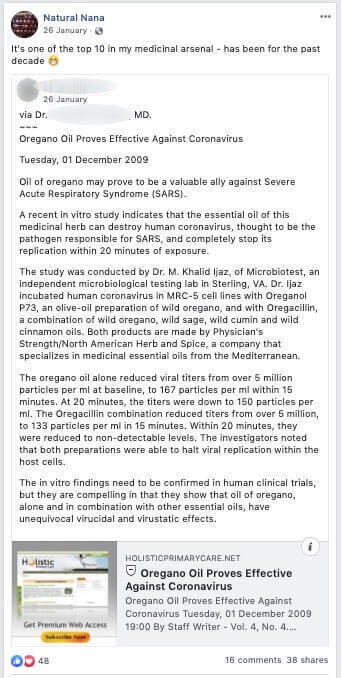

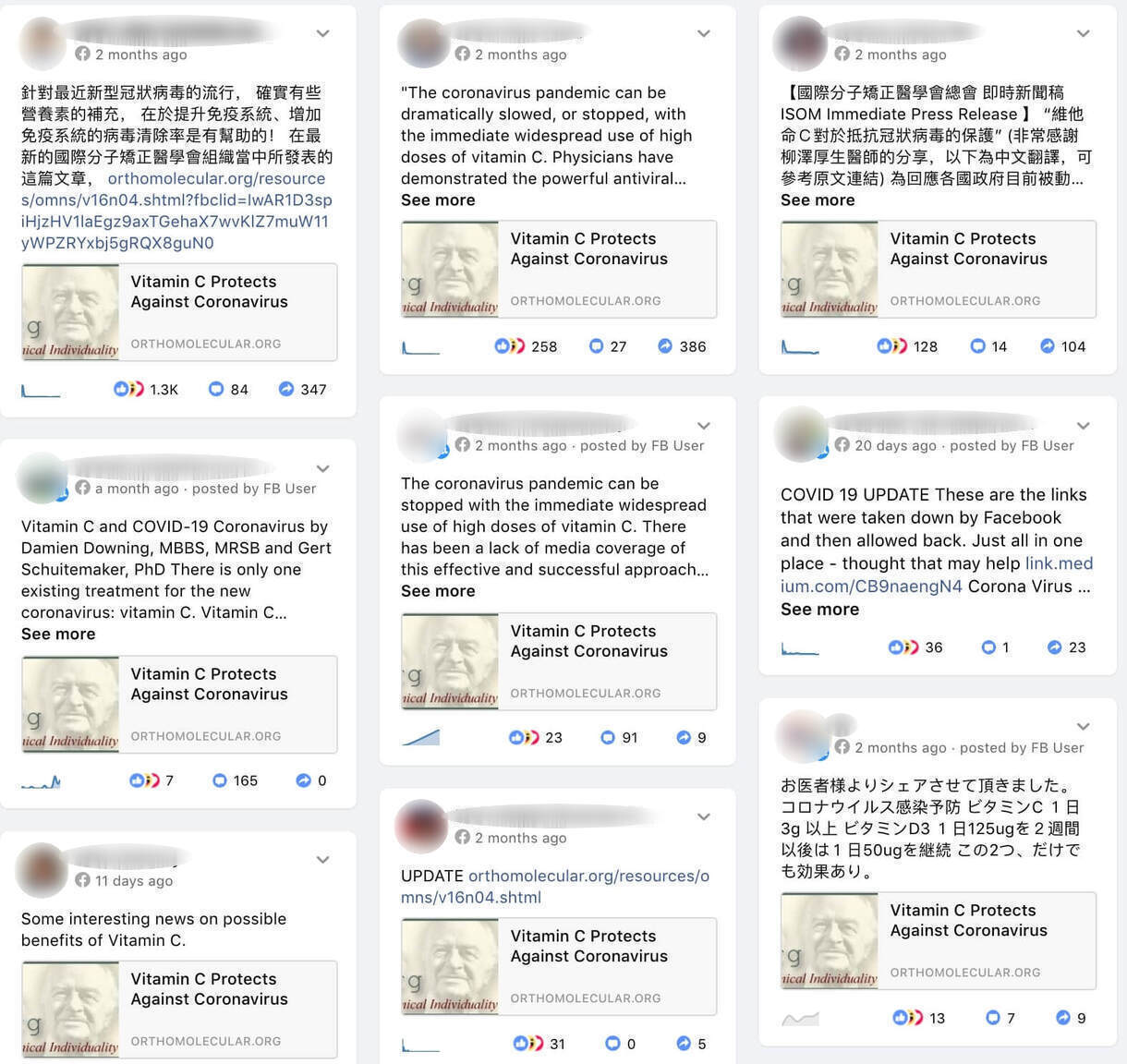

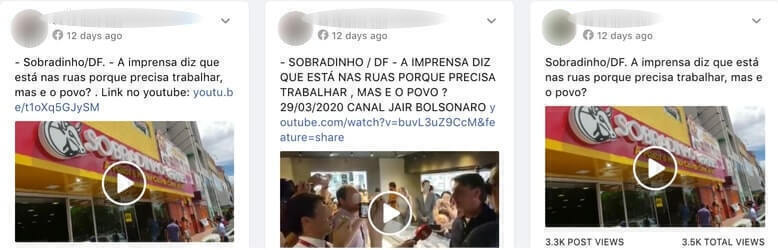

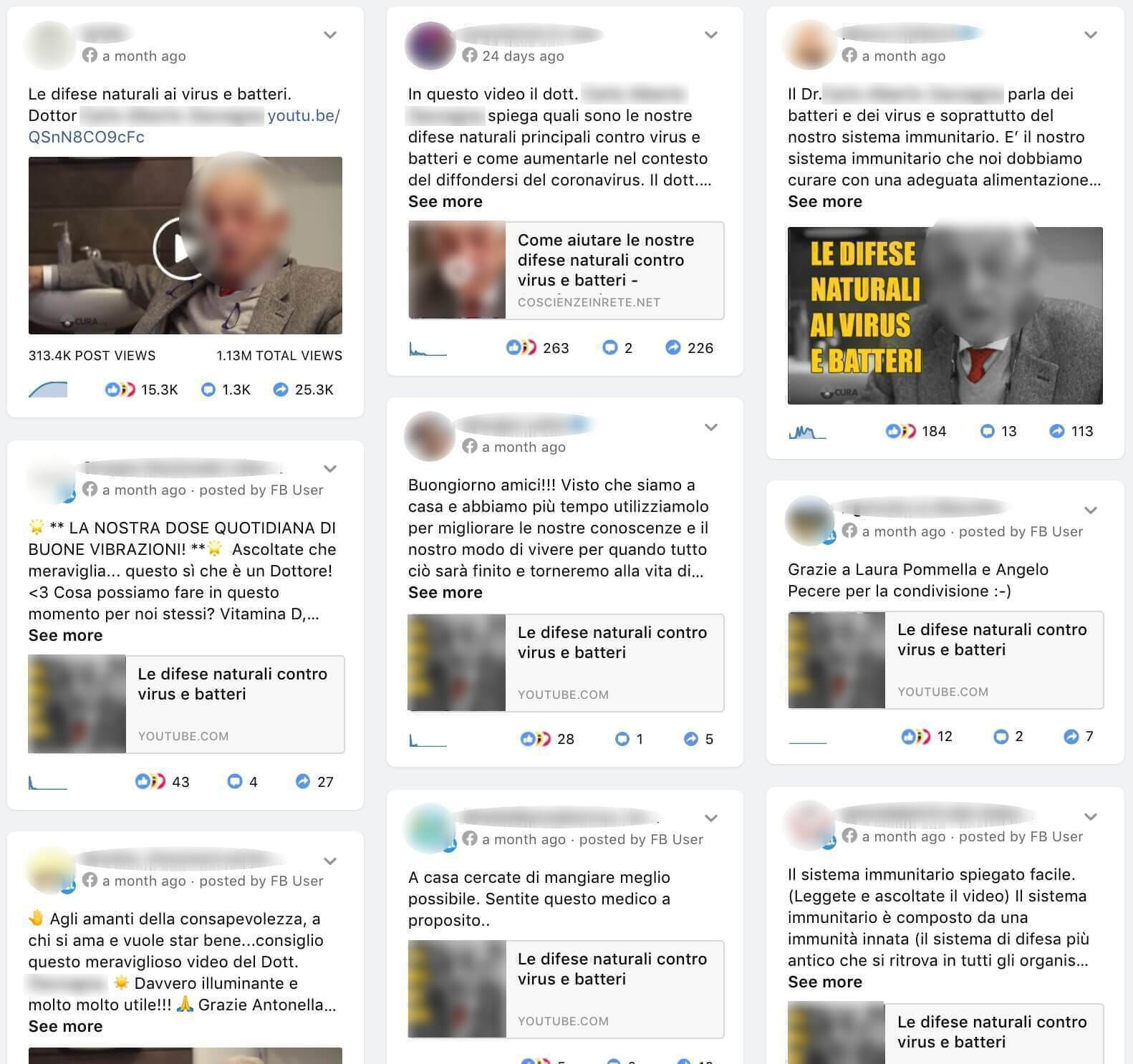

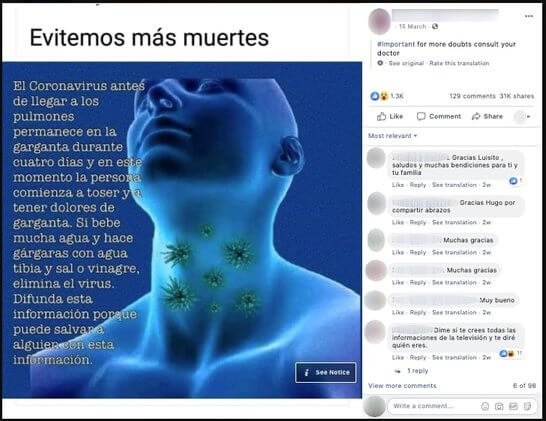

For this study, from the thousands of pieces of coronavirus-related misinformation being shared on Facebook, we selected over 100 pieces of content, in six languages, that had been rated false and misleading by reputable, independent fact-checkers and that could cause public harm.3 [See Methodology and Data Set section for detail.]

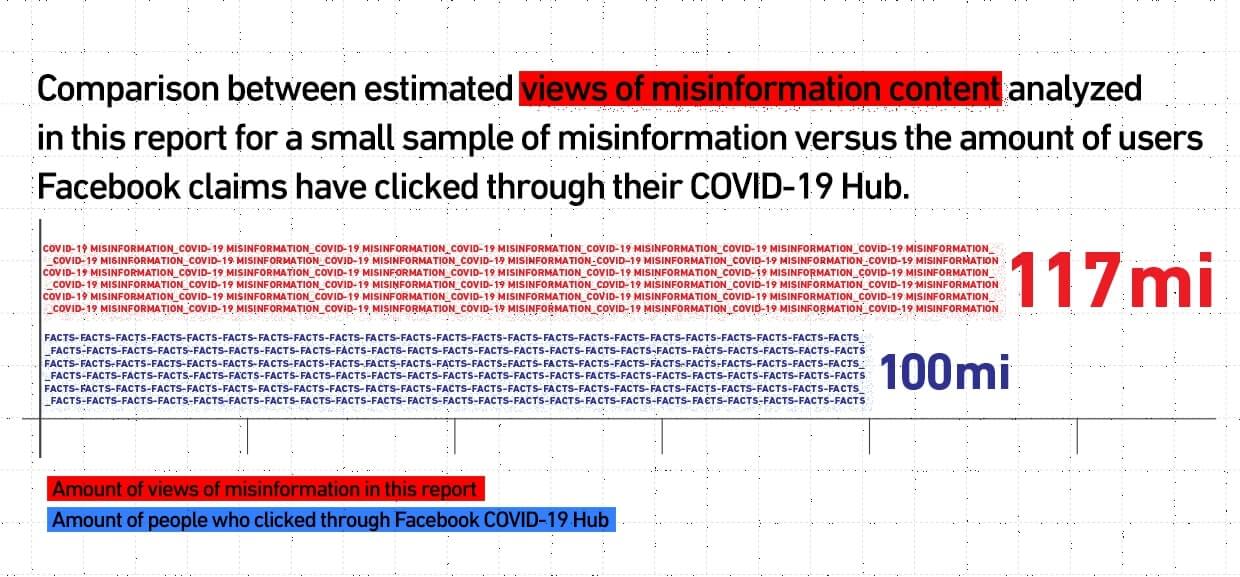

We found that millions of the platform’s users are still at risk of consuming harmful coronavirus misinformation at a large scale. The pieces of content we sampled and analysed, which represent only the tip of the misinformation iceberg, were shared over 1.7 million times on Facebook and viewed an estimated 117 million times.

Even taking into consideration the commendable efforts of Facebook’s anti-misinformation team to fight this infodemic, the platform’s current policies are insufficient and do not protect its users.

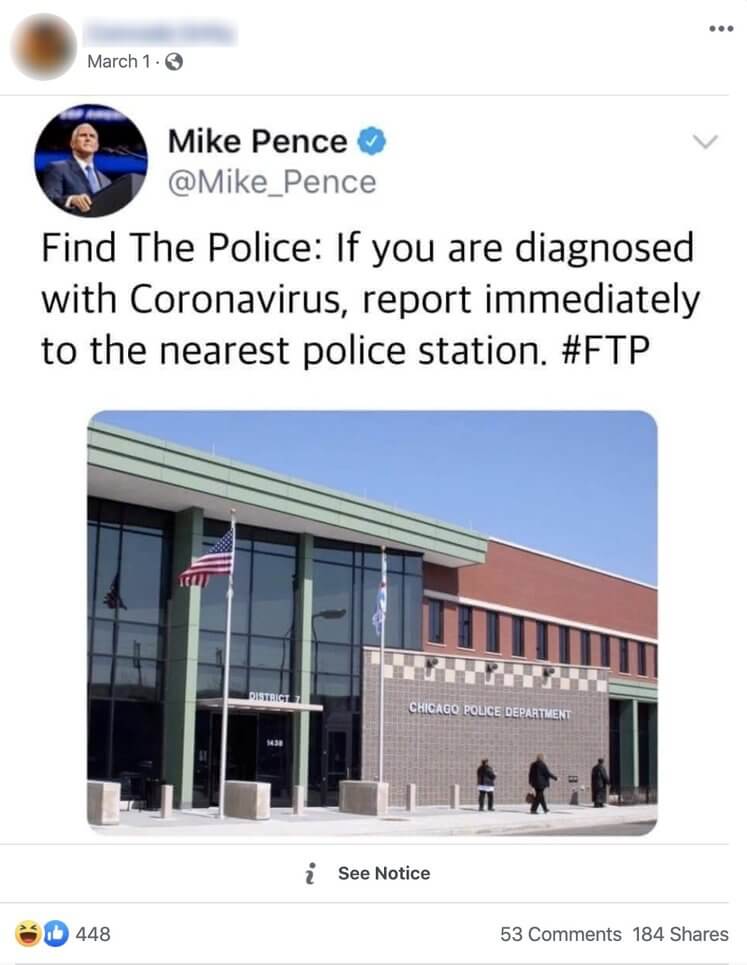

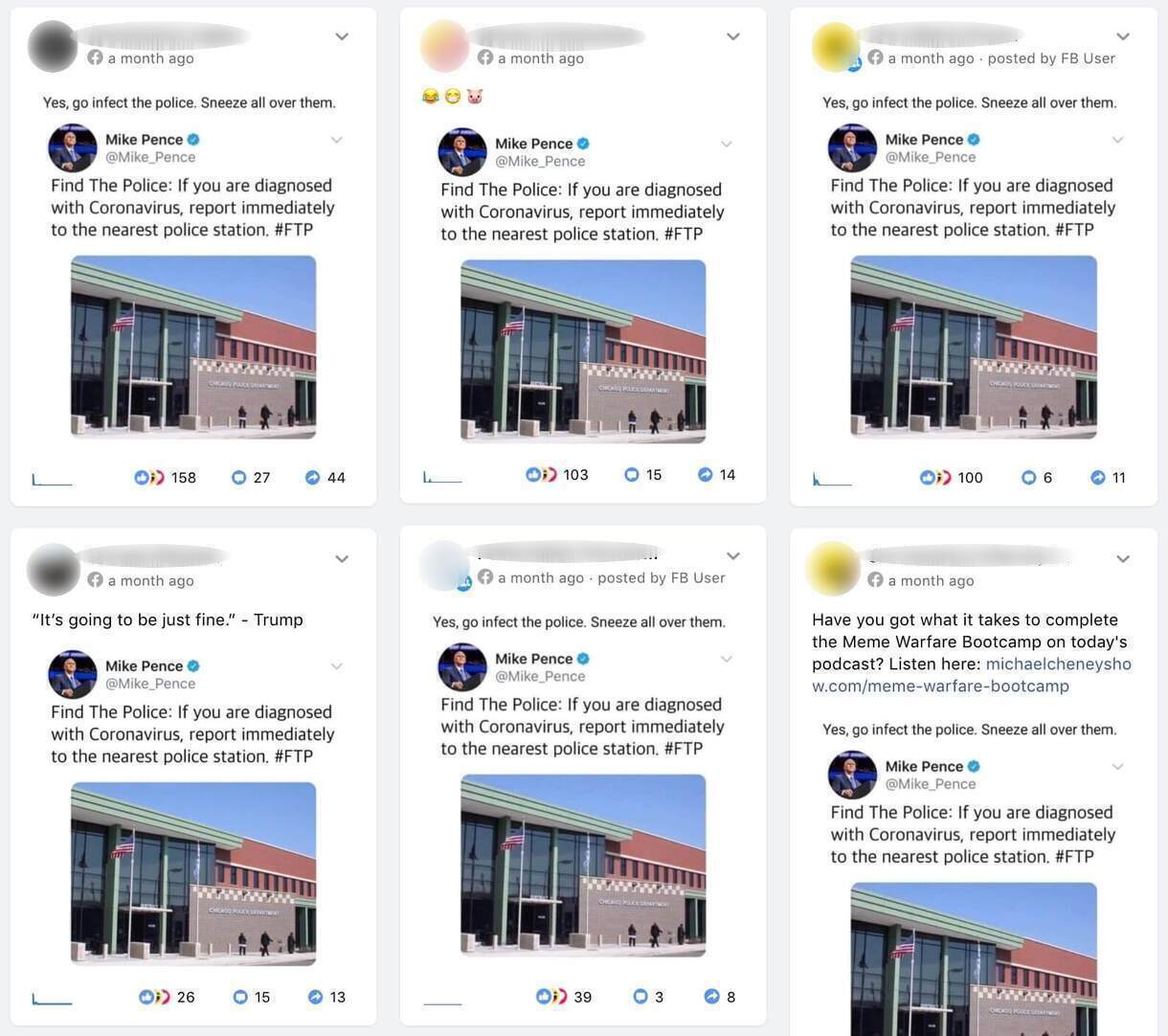

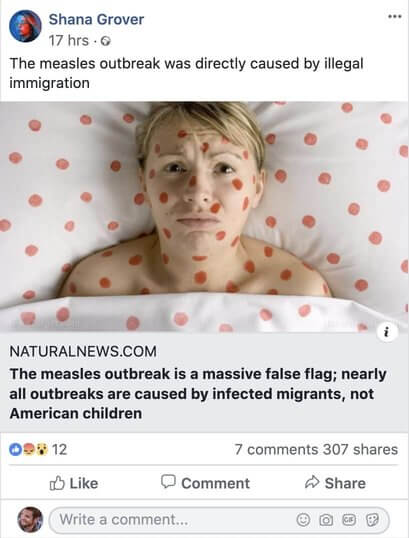

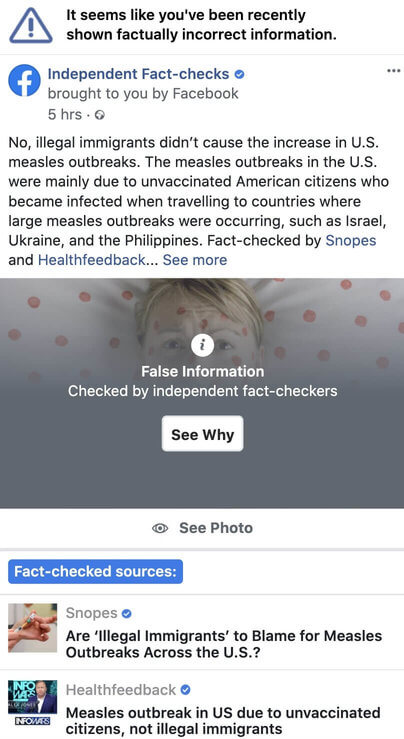

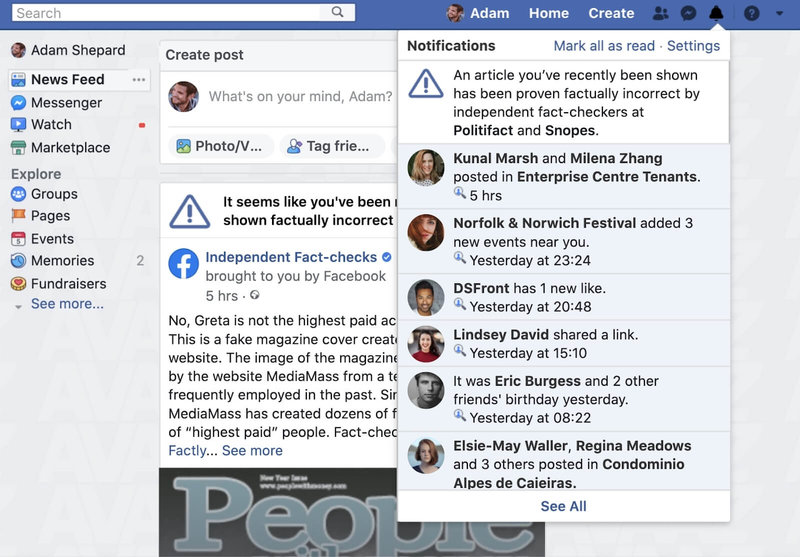

Of the 41% of this misinformation content that remains on the platform without warning labels, 65% has been debunked by partners of Facebook’s very own fact-checking program.4 [For our study, we analysed only misinformation content that had primary, or direct, fact checks (namely, fact checks that linked or referred to specific posts or articles). For those pieces of content that did not have primary or direct fact checks, we applied findings from primary fact checks to identical health claims made in those posts.] Throughout the timeframe of our research,5 [Our research period covered the actions and statements issued by Facebook between 16 January 2020 and 14 April 2020. However, based on conversations with representatives of the platform, we are encouraged by efforts the platform is experimenting with to improve the policies and processes that reflect some of the recommendations mentioned below.] this content remained on the platform despite the company’s promise to issue “strong warning labels” for misinformation flagged by fact-checkers and other third-party entities, and to remove misinformation that could contribute to imminent physical harm.6 [Facebook, Combating COVID-19 Misinformation Across Our Apps, 25 March 2020, https://about.fb.com/news/2020/03/combating-covid-19-misinformation/]

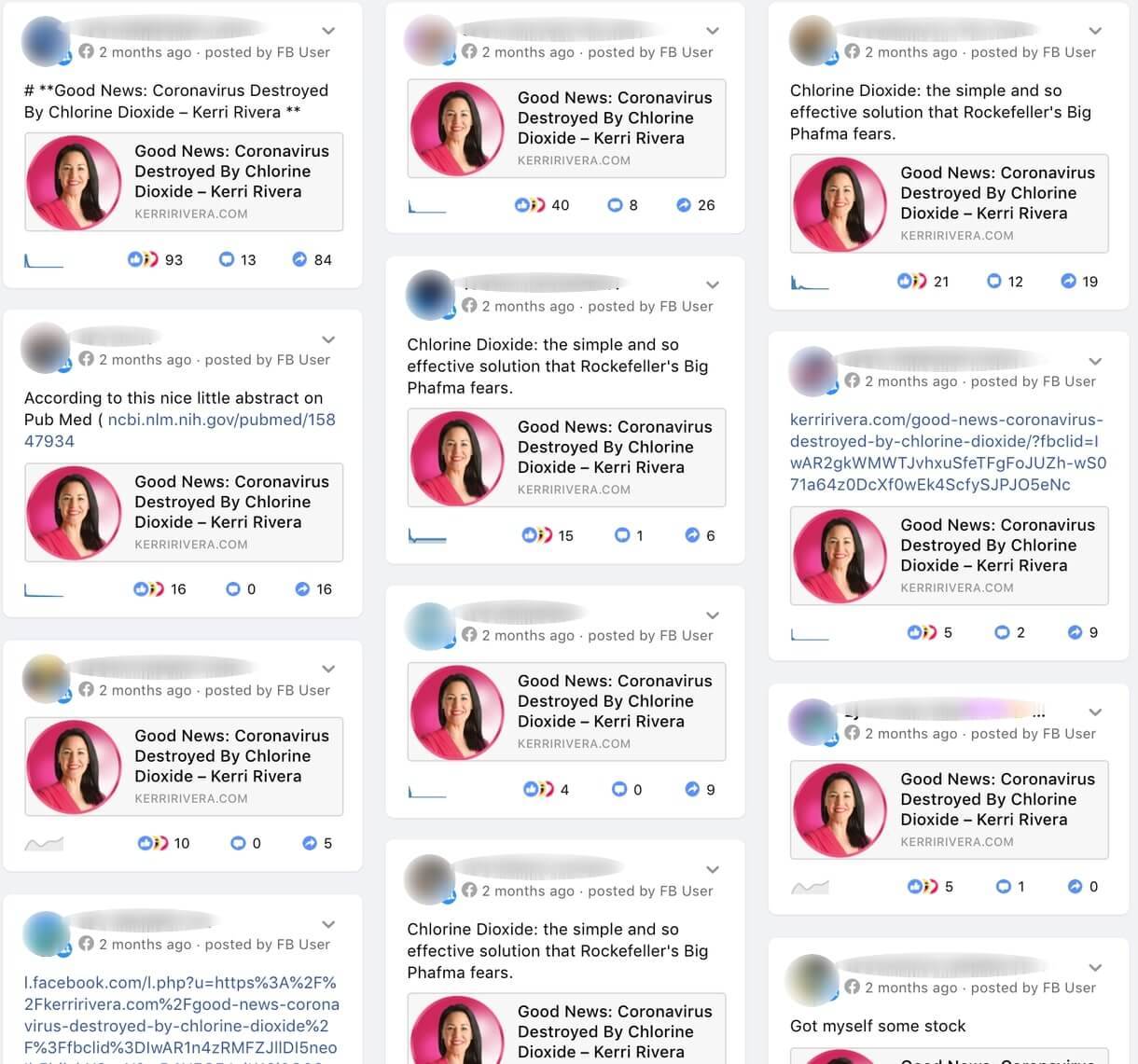

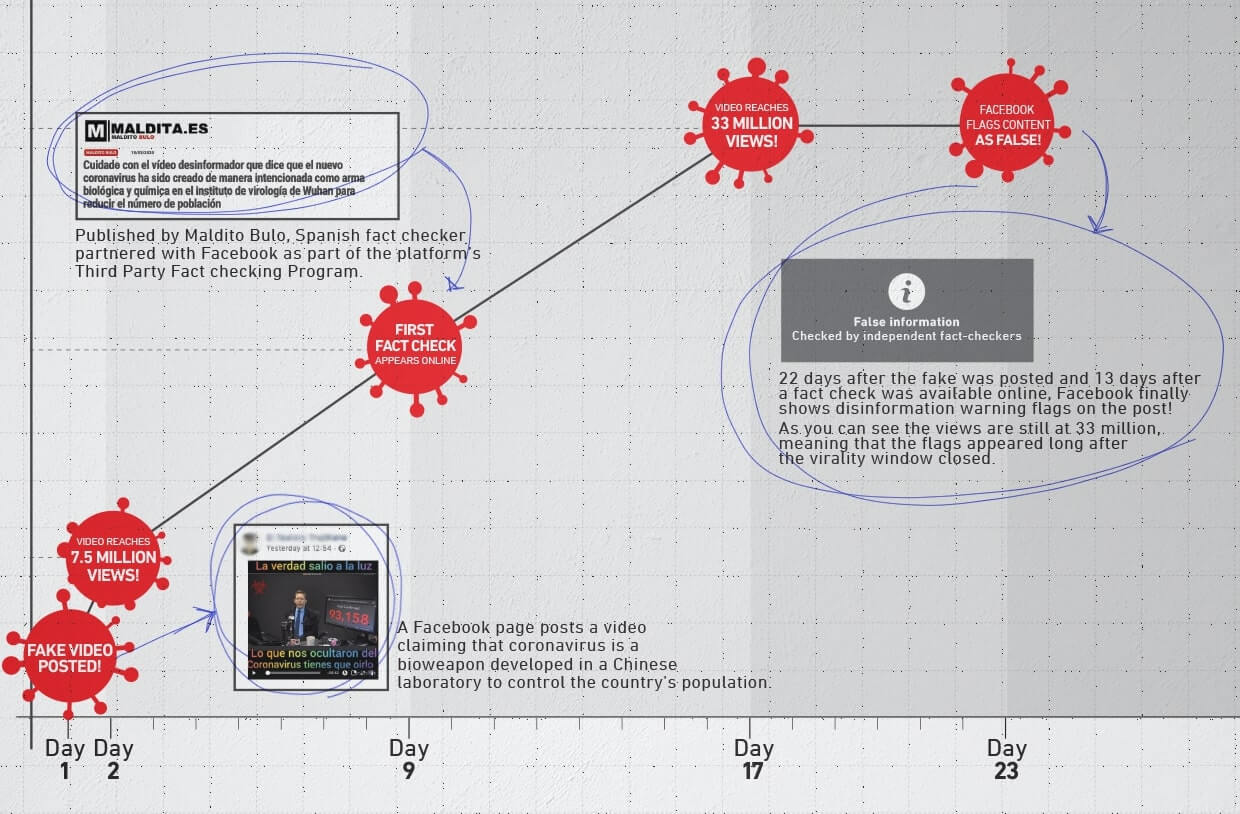

Secondly, Avaaz found that there are significant delays in Facebook’s implementation of its anti-misinformation policies. These delays are especially troubling because they result in millions of users seeing harmful misinformation content about the coronavirus before the platform labels it with a fact check and warning screen or removes it. Specifically, we found that it can take up to 22 days for the platform to downgrade and issue warning labels on such content, giving ample time for it to go viral.

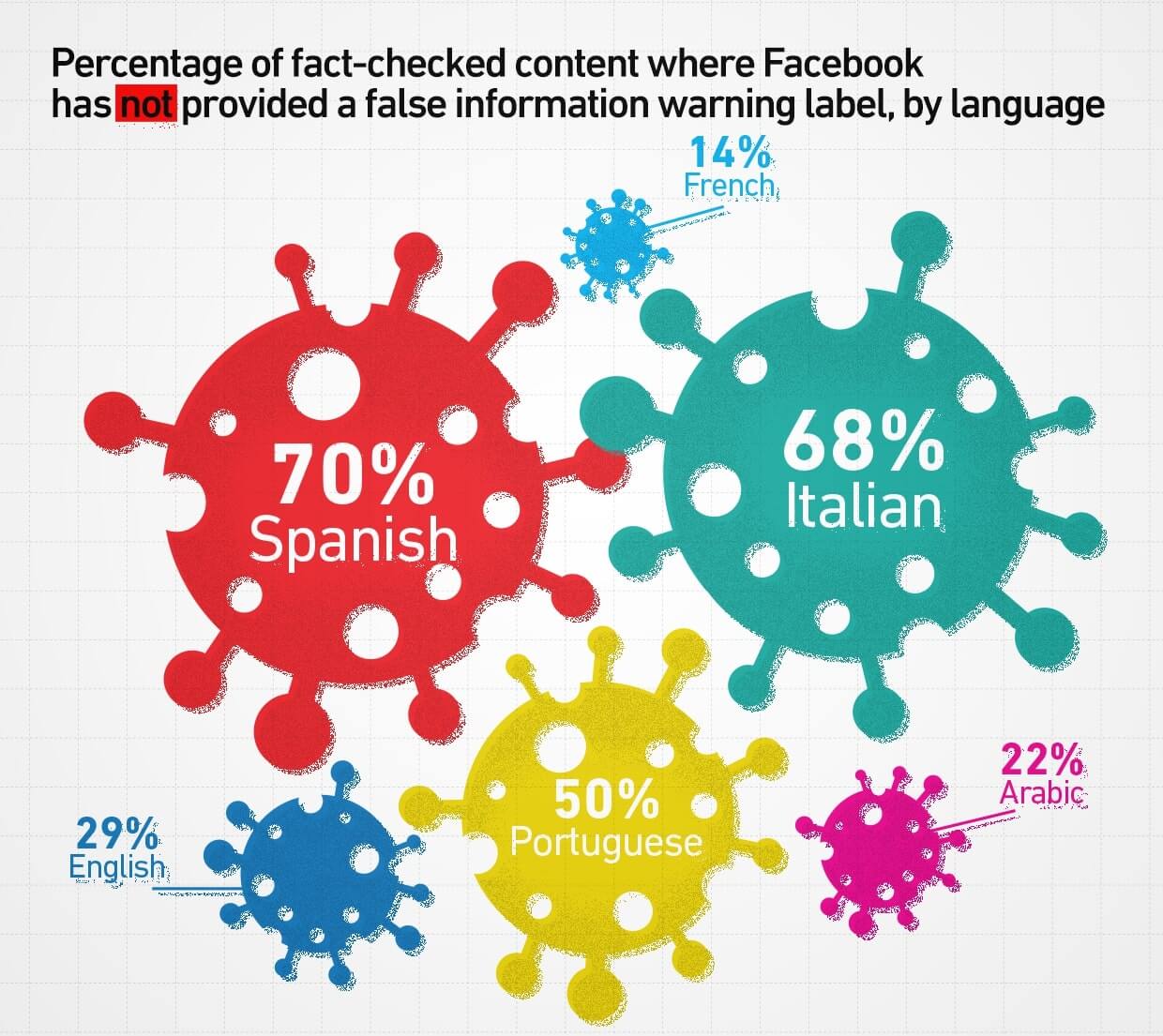

Our analysis also indicates that Italian- and Spanish-speaking users may be at greater risk of misinformation exposure. Facebook has not yet issued warning labels on 68% of the Italian-language content and 70% of the Spanish-language content we examined, compared to 29% of the English-language content.

The scale of this “infodemic”, together with Facebook’s reluctance to retroactively notify and provide corrections to every user exposed to harmful misinformation about the coronavirus, is threatening efforts to “flatten the curve” across the world and could put lives at risk.

In a conversation with members of Facebook’s misinformation team on 13 April 2020, we were strongly encouraged by unprecedented commitments from Facebook to institute retroactive alerts to fight coronavirus misinformation, an important and necessary first step that could save lives.7 [By moving to alert those who see misinformation, the platform is now doing more than any other leading social media platform to inform users about false content they have encountered, and is starting to fix many of the policy gaps highlighted above.]

To ensure that the platform speedily removed content that could cause imminent physical harm to Facebook users, on 8 April 2020 we shared with the company a list of misinformation posts that we believe violate its policies and recommended that all be removed. In response, Facebook has, as of 14 April 2020, taken down 17 of the posts we flagged, which together had an estimated 2.4 million views.
